The 2026 Strategic Choice: Cloud AI offers instant speed and is often better suited for experimentation, while On-Prem AI provides the long-term data sovereignty and cost control required for core business operations.

Currently, the choice between public cloud and On-Prem hardware is the difference between renting and owning where your firm's intelligence and data reside.

Cloud vs. On-Prem AI Servers: Strategic Comparison

Choosing the right environment depends on your specific workload volume, the sensitivity of your data, and your internal technical capacity.

| Feature | Cloud AI (SaaS/IaaS) | On-Prem (Private) |

|---|---|---|

| Data Privacy | Shared responsibility; processed on 3rd party hardware. High exposure risk. | Full Sovereignty; data never leaves your physical perimeter. |

| Capital Profile | Low operational spend (OpEx); pay-as-you-go per token or hour. | High Capital Asset (CapEx); upfront investment in GPUs and cooling. |

| Long-Term Cost | Can spike with high, consistent daily usage, a variable "Token Tax". | Fixed TCO; hardware ROI in <18 months. |

| Scalability | Instant; spin up 100 GPUs in minutes for big projects. | Planned; requires physical setup (weeks). |

| Speed (Latency) | Dependent on internet (80ms–400ms). | Instant; local network speeds (under 20ms) (dependent on network speed). |

| Maintenance | Managed by Amazon, Google, or Microsoft. | Managed by internal IT or a Managed Contract. |

| Compliance | Third-party certification reliance. | Native Adherence; you own the audit trail to UAE Federal Law No. 45. |

What are the trade‑offs?

The case for cloud

The cloud is ideal for variable workloads, such as a monthly financial audit or seasonal data processing.

The case for On-Prem

On-Prem is becoming the Gold Standard for data-centric businesses. Lately, we are seeing “Inference Inversion”, where it is now 10x cheaper for businesses to run their own models locally, rather than paying a “token tax” to a public API for every query.

AI Infrastructure: 3‑Year Cost‑Benefit Calculator (2026)

The table below shows the Total Cost of Ownership (TCO) across different business scales. More recently, the “break-even” point for On-Prem hardware has dropped significantly due to the high “token tax” of cloud APIs. They have become more cost effective, more readily available, and more efficient Small Language Models (SLMs).

1. The scaling roadmap: cloud vs. On-Prem

The following table compares a standard Cloud Managed Service (GPU‑as‑a‑Service + API fees) against a Private AI Server (CapEx + 3 years of OpEx).

| Business Size | Typical AI Use Case | 3‑Year Cloud Expenditure (OpEx) | 3‑Year Sovereign Investment (CapEx) | Break‑Even | Details |

|---|---|---|---|---|---|

| 1–10 Employees | Basic Research & Search | $18k – $35k | $12k – $22k | 14–18 months | Learn more |

| 11–30 Employees | Workflow & Coding | $65k – $120k | $35k – $55k | 9–12 months | Learn more |

| 31–50 Employees | Custom Private AI Models | $180k – $300k | $85k – $120k | 6–8 months | Learn more |

| 50+ Employees | Enterprise‑wide Agents | $500k+ | $250k+ | < 6 months | Learn more |

2. Choosing your strategy

Tier 1: Growth-Stage (1–10 Employees)

The primary risk at this level is Intellectual Property (IP) leakage. While cloud tools are convenient, processing sensitive client contracts or internal strategy via public APIs creates a permanent digital footprint.

- The Investment: A high-performance Sovereign Workstation.

- The Value: Eliminates monthly "Pro" subscription overhead and secures 100% of your data on-site.

Tier 2: Mid-Market Scale (11–30 Employees)

As your team integrates AI into daily workflows, the "Token Tax" (API fees) often exceeds the cost of financing your own hardware.

- The Investment: A dedicated mid-tier server cluster.

- The Value: Replaces variable monthly cloud bills with a one-time investment that typically breaks even within 9–12 months.

Tier 3: Institutional Mid-Market (31–50 Employees)

At this business size, you are likely no longer using AI just for Q&A; you are likely fine-tuning models on your proprietary data. Cloud providers often impose rate limits or "Priority Pricing" that penalize high-volume users.

- The Investment: Multi-node GPU clusters.

- The Value: Performance consistency. Your team gains a proprietary "Employee Brain" that remains accessible even during global outages.

Tier 4: Enterprise (50+ employees)

For large-scale operations, speed is what affects AI productivity. When 100+ employees hit a public cloud simultaneously, variable lag disrupts the user experience.

- The Investment: Private Data Center or Private Cloud Infrastructure (PCI).

- The Value: Faster response times, and with your AI hosted locally, you will also be UAE AI ACT compliant.

The hidden costs and Savings people forget

While On-Prem hardware is an upfront investment, it eliminates unpredictable "Token Inversion" costs. It is predicted that by 2028, firms owning their hardware will see a 60% lower operational cost compared to those reliant on public API pricing.

When calculating your final numbers, don’t forget these “invisible” On-Prem costs:

- Utility & Cooling: High-performance hardware requires dedicated power (~AED 400–1,800/month depending on Tier).

- Maintenance: We recommend budgeting 10% of the initial CapEx for annual optimization.

- Human Capital: Depending on the complexity of your AI, it could require specialized management, which can be handled by your internal IT team or through our Managed Services Contract.

Future-Proof AI Expansion

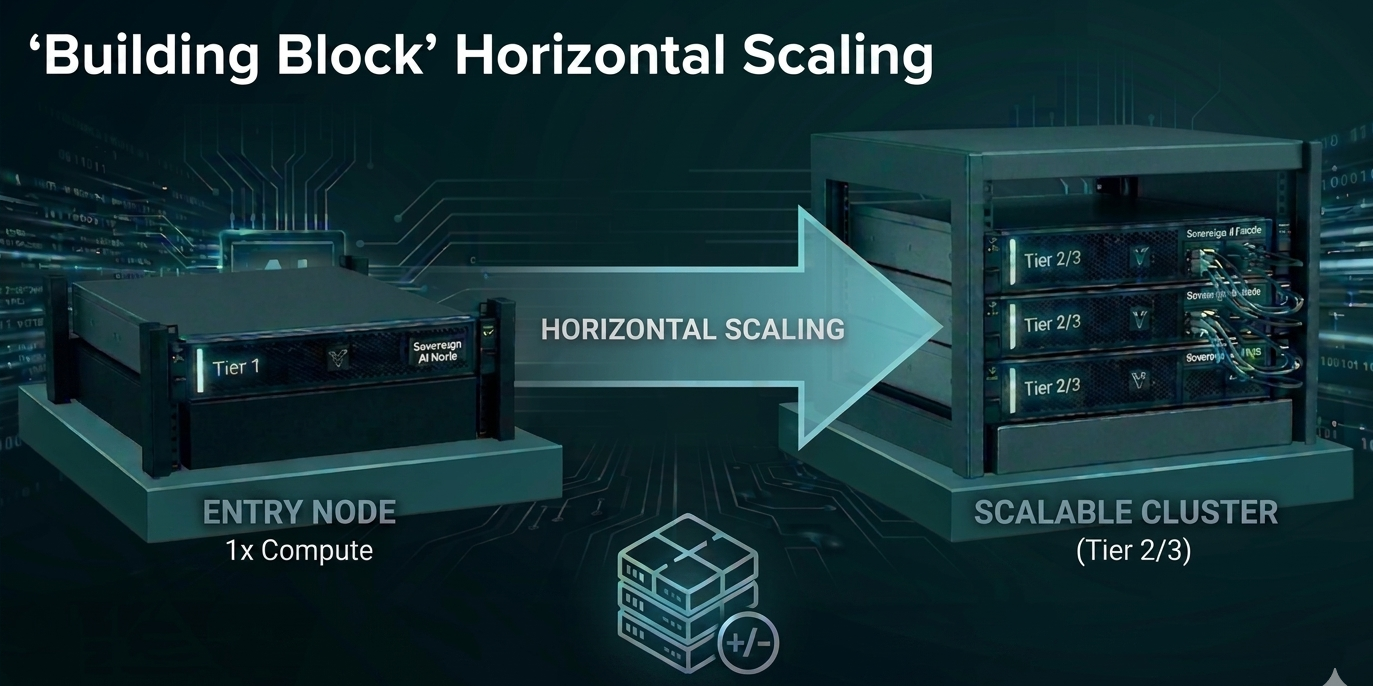

A common misconception is that AI hardware is a fixed investment. However, our Vizion AI architecture is designed for Elastic Growth. As your data volume and headcount increase, your infrastructure evolves with you without requiring full hardware replacement.

How You Scale: The Modular Roadmap

- Horizontal Node Expansion: Our architecture uses a decoupled compute model. When your processing demands double, a new Sovereign Node can be added to your existing rack. Your “Employee Brain” can then scale instantly without downtime.

- The 70% CAPEX Advantage: Avoid over-provisioning on Day 1. Start with the capacity required for your current team size, and then scale your hardware only as ROI is proven. This reduces initial capital risk by up to 70%.

- Small Language Model (SLM) Optimization: We use a high-efficiency model architecture that provides 90% of the capability of a “Giant AI” at 10% of the compute cost. This allows your existing hardware to handle 5x more concurrent users than traditional setups.

- Hybrid-Fluidity: If you have seasonal peaks, a hybrid option may be the right solution for you. Our Bridge Protocol allows you to burst excess workloads into the Sovereign Cloud for 48 hours and then retract them, maintaining a lean On-Prem footprint.

Below is a table that shows the estimated infrastructure your business may require as you scale.

| Milestone | Infrastructure Action | Business Impact |

|---|---|---|

| +50 Employees | Add 1x GPU Node | Maintains sub-20ms latency for all users. |

| +10TB Data | Expand Sovereign Storage | 100% Data Residency remains intact. |

| New Department | Deploy Isolated Logic Plane | “Securely partitions HR, Finance, and R&D data.” |

| Global Expansion | Regional Cloud Bursting | Maintains UAE compliance while serving global leads. |